Using Outside Air and Evaporative Cooling to Increase Data Center Efficiency


Ten years ago, it was all about the gigahertz, as Intel and AMD raced to put out ever faster processors. These days it’s all about the gigawatts: how to cut the power used to run all those processors. Jonathan Koomey, Ph.D. of Lawrence Berkeley National Laboratory, estimated that worldwide data center power consumption was 152.5 billion kWh in 2005, more than double the 2000 consumption.

In 2007, the US EPA estimated that servers and data centers “consumed about 61 billion kilowatt-hours (kWh) in 2006 (1.5 percent of total U.S. electricity consumption) for a total electricity cost of about $4.5 billion.”

The same report projected that, despite improving efficiency trends, national energy consumption by servers and data centers could nearly double again in another five years (i.e., by 2011) to more than 100 billion kWh, representing a $7.4 billion annual electricity cost (Report to Congress on Server and Data Center Energy Efficiency, Public Law 109-431, U.S. Environmental Protection Agency ENERGY STAR Program, August 2, 2007).

While computer usage has continued to rise since 2005/2006, two trends have cut into the growth of data center power usage. The first is more efficient servers and server architectures, including multicore chips running at slower clock speeds and the use of virtual machines to reduce the number of physical servers needed.

The other trend is the use of a more efficient data center infrastructure, by lowering the amount of energy needed to cool the servers. Traditional compressor-based chilled air computer room air conditioning (CRAC) units are being supplemented by the water-cooling of servers, as well as the use of outside air for cooling purposes.

But there is a largely overlooked method of cooling the data center that is cheap and easy to implement: using the natural cooling power of evaporation to bring both temperature and humidity into the desired range. A high-pressure fogging system with a 10 HP motor could replace a 500 ton chiller. The capital cost, operating expense and parasitic load of evaporative cooling are a tiny fraction of those of traditional chiller units.

Using fogging to control temperature and humidity is not a new or speculative cooling technology, but has been in use for decades. As data center operators look for ways to cut their operating costs and greenhouse gas emissions, it is becoming a more popular cooling choice for facilities such as the new data center Facebook is building in Oregon.

Water and Air Cooling for Data Centers

Water and air have long been the two methods used to transfer heat from data center equipment to the outside world. Water was the early choice for mainframes. The UNIVACs of the early 50s used water to cool thousands of vacuum tubes. Perhaps the first overheating incident occurred with the UNIVAC I at the US Steel facility in Gary, Indiana, when a fish got stuck in the pipe being used to pull water from Lake Michigan.

But when processors switched to cooler-running chips, water fell out of style, and IBM stopped manufacturing water cooled units in 1995.

For many years, then, just bringing the room air temperature down was enough, and was safer and more efficient than trying to pipe water to each component in the expanding array of servers, storage and networking gear. But with the advent of high-density computing architectures such as multiprocessor rack units and blade servers, normal room air conditioning no longer fit the bill. Pinpoint cooling systems were needed to address localized hot spots. Water, which can carry about 3,500 times as much heat per unit of volume as air, made a comeback.

The approaches varied. American Power Conversion Corporation created the InfraStruXure line of racks, which bring water to radiators in the racks to cool the air. Liebert Corporation’s XD products take a similar approach, but some models use a piped refrigerant instead of water. IBM and Hewlett-Packard also have water-cooled heat exchangers for their racks.

Other companies have developed technologies that use liquid to directly cool components, without using air as an intermediary. For example, Clustered Systems Company manufactured a rack system that uses cooling plates that sit above the equipment. The servers are modified to remove the fans, and instead of heat sinks, the chips have heat risers that transfer the heat to the cooling plate.

Those plates are connected to a Liebert heat exchanger. IBM’s Hydro-Cluster technology uses water cooled copper plates placed directly above the processors. Emerson Network Power’s Cooligy Group uses fluid moving through micro channels in a silicon wafer to draw the heat away from a CPU.

Water and Evaporative Cooling

Each of these water-based cooling approaches, however, only uses the ability of water to absorb large quantities of heat in its liquid form. More effective cooling comes from using the energy absorbed by evaporating water. Just look at the numbers: It takes 100 calories to warm one gram of water from freezing to the boiling point (0ºC to 100ºC.).

It takes an additional 539 calories to vaporize that gram of water as steam. Fogging systems rely on an adiabatic process: no external energy is added to drive the phase change, so the heat needed to evaporate each gram of water is drawn from the air itself, which lowers the air temperature. This effect is known as evaporative cooling.
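To make the comparison concrete, the short Python sketch below uses only the two figures quoted above (100 calories per gram to warm water from 0ºC to 100ºC, and 539 calories per gram to vaporize it). The constant and function names are illustrative.

```python
# Figures quoted in the text, per gram of water.
SENSIBLE_CAL_PER_GRAM = 100.0  # warming liquid water from 0 C to 100 C
LATENT_CAL_PER_GRAM = 539.0    # vaporizing that gram of water


def latent_to_sensible_ratio() -> float:
    """Heat absorbed by evaporation relative to the full 0-100 C warm-up."""
    return LATENT_CAL_PER_GRAM / SENSIBLE_CAL_PER_GRAM


if __name__ == "__main__":
    ratio = latent_to_sensible_ratio()
    print(f"Evaporating a gram of water absorbs {ratio:.2f}x the heat "
          f"needed to warm it from freezing to boiling.")
```

In other words, the phase change absorbs more than five times the heat that liquid water can take up across its entire temperature range.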

However, you do not want to spray the water directly onto the chips, nor do you want the chips to be hot enough to boil the water. Fortunately, the adiabatic cooling process can be easily applied to existing or new air handling systems by spraying a fine fog of water into the air stream. There it evaporates, thus lowering the temperature and raising the humidity of the air that is used to cool the data center.
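As a rough illustration of how much cooling a fog can deliver, the sketch below estimates the temperature drop of an air stream when a given mass of water evaporates into it. It relies on textbook property values that do not come from this article (a latent heat of about 2,450 kJ/kg near room temperature and a specific heat of dry air of about 1.006 kJ/kg·K). It also ignores the heat capacity of the water vapor and assumes that all of the fog evaporates. The function name is hypothetical.

```python
# Assumed textbook property values (not taken from the article).
LATENT_HEAT_KJ_PER_KG = 2450.0  # water evaporating near room temperature
AIR_CP_KJ_PER_KG_K = 1.006      # specific heat of dry air


def air_temp_drop_c(water_g_per_kg_air: float) -> float:
    """Approximate temperature drop (in C) of 1 kg of dry air when the given
    number of grams of water evaporates into it with no external heat input."""
    heat_removed_kj = (water_g_per_kg_air / 1000.0) * LATENT_HEAT_KJ_PER_KG
    return heat_removed_kj / AIR_CP_KJ_PER_KG_K


if __name__ == "__main__":
    for grams in (1.0, 2.0, 4.0):
        print(f"{grams:.0f} g of water per kg of air: "
              f"about {air_temp_drop_c(grams):.1f} C of cooling")
```

Under these assumptions, each gram of water evaporated per kilogram of air cools the air by roughly 2.4ºC. The estimate only holds until the air reaches saturation, after which no more water can evaporate.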

New ASHRAE Standards

Fogging ties nicely into the new guidelines for data centers issued by the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE). The 2008 ASHRAE Environmental Guidelines for Datacom Equipment expanded the recommended inlet air temperature range.

Under the previous ASHRAE guidelines issued in 2004, the inlet temperatures were recommended to be in a range of 20 to 25ºC (68ºF to 77ºF) with a relative humidity range of 40% to 55%. The ASHRAE Technical Committee 9.9 (TC 9.9) reviewed current information from equipment manufacturers and extended the recommended operating range from a low of 18ºC (64.4ºF) to a high of 27ºC (80.6ºF).

According to the Guidelines, “For extended periods of time, the IT manufacturers recommend that data center operators maintain their environment within the recommended envelope. Exceeding the recommended limits for short periods of time should not be a problem, but running near the allowable limits for months could result in increased reliability issues.”

TC 9.9 took a different approach with regard to humidity. Whereas the previous version specified the range in terms of relative humidity, the new guidelines concentrate on the dew point, with a range from a low end of a 5.5ºC (41.9ºF) dew point to a high end of 60% relative humidity and a 15ºC (59ºF) dew point: “Having a limit of relative humidity greatly complicates the control and operation of the cooling systems and could require added humidification operation at a cost of increased energy in order to maintain an RH when the space is already above the needed dew point temperature.”

The upper limit was based on testing of printed circuit board laminate materials which found that, “Extended periods of relative humidity exceeding 60% can result in failures, especially given the reduced conductor to conductor spacings common in many designs today.” The lower limit is set primarily to minimize the electrostatic buildup and discharge that can occur in dry air, resulting in error conditions and sometimes equipment damage.

In addition, low humidity can dry out lubricants in motors, disk drives and tape drives, and cause buildup of debris on tape drive components.

Both the temperature and humidity should be measured at the inlet to the equipment. Typically equipment at the top of a rack will have higher inlet temperature and lower relative humidity than equipment nearer the floor.
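Because the 2008 envelope mixes dry-bulb temperature, dew point and relative humidity, checking a sensor reading against it takes a small calculation. The Python sketch below is one way to do that under stated assumptions: it estimates dew point with the Magnus approximation (a common empirical formula, not something specified in the ASHRAE text), and the coefficients and function names are choices made for this illustration. The limits are the ones quoted above.

```python
import math

# Magnus approximation coefficients (an assumption; not from the Guidelines).
MAGNUS_B = 17.62
MAGNUS_C = 243.12  # degrees C

# 2008 recommended envelope as quoted in the text.
TEMP_MIN_C, TEMP_MAX_C = 18.0, 27.0
DEW_POINT_MIN_C, DEW_POINT_MAX_C = 5.5, 15.0
RH_MAX_PERCENT = 60.0


def dew_point_c(temp_c: float, rh_percent: float) -> float:
    """Estimate dew point (C) from dry-bulb temperature and relative humidity."""
    gamma = math.log(rh_percent / 100.0) + MAGNUS_B * temp_c / (MAGNUS_C + temp_c)
    return MAGNUS_C * gamma / (MAGNUS_B - gamma)


def in_recommended_envelope(temp_c: float, rh_percent: float) -> bool:
    """Return True if an inlet reading falls inside the recommended envelope."""
    dew_point = dew_point_c(temp_c, rh_percent)
    return (TEMP_MIN_C <= temp_c <= TEMP_MAX_C
            and DEW_POINT_MIN_C <= dew_point <= DEW_POINT_MAX_C
            and rh_percent <= RH_MAX_PERCENT)


if __name__ == "__main__":
    for temp, rh in ((22.0, 50.0), (22.0, 20.0), (27.0, 60.0)):
        print(f"{temp:.0f} C at {rh:.0f}% RH: dew point "
              f"{dew_point_c(temp, rh):.1f} C, "
              f"in envelope: {in_recommended_envelope(temp, rh)}")
```

A reading should be taken at the equipment inlet, as noted above, so in practice a check like this would run per rack position rather than once per room.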

Exceeding the Envelope

The 2008 ASHRAE standards were primarily concerned with equipment reliability. However, the changes were also designed to cut cooling and humidification costs by allowing increased use of outside air economizers. The recommended temperature and humidity guidelines are conservative.

ASHRAE also provides a set of allowable temperature and humidity ranges for equipment which are much broader. As the Guidelines state, “For short periods of time it is acceptable to operate outside this recommended envelope and approach the extremes of the allowable envelope. All manufacturers perform tests to verify that the hardware functions at the allowable limits. For example if during the summer months it is desirable to operate for longer periods of time using an economizer rather than turning on the chillers, this should be acceptable as long as this period of warmer inlet air temperatures to the datacom equipment does not exceed several days each year where the long term reliability of the equipment could be affected.”

These guidelines also recognize that in a highly redundant data center that can tolerate some equipment failure, it may even make sense to use outside air and operate at higher temperatures, even though there is a risk of some servers failing. They state: “This, of course, would be a business decision on where to operate within the allowable and recommended envelopes and for what periods of time.” Google, for example, is known to operate its data centers at temperatures above the ASHRAE recommendations in order to cut operating costs.

Making Economizers More Economical

Building managers have been using fogging systems as an economical method to control temperature and humidity for decades. The new ASHRAE guidelines and the broader industry push to use more outside air make fogging an ideal option for cooling data centers. The use of outside air economizers in data centers has been limited by temperatures or humidity exceeding the range needed. Fogging handles both issues at a far lower initial and operating cost than other methods of cooling and humidification.

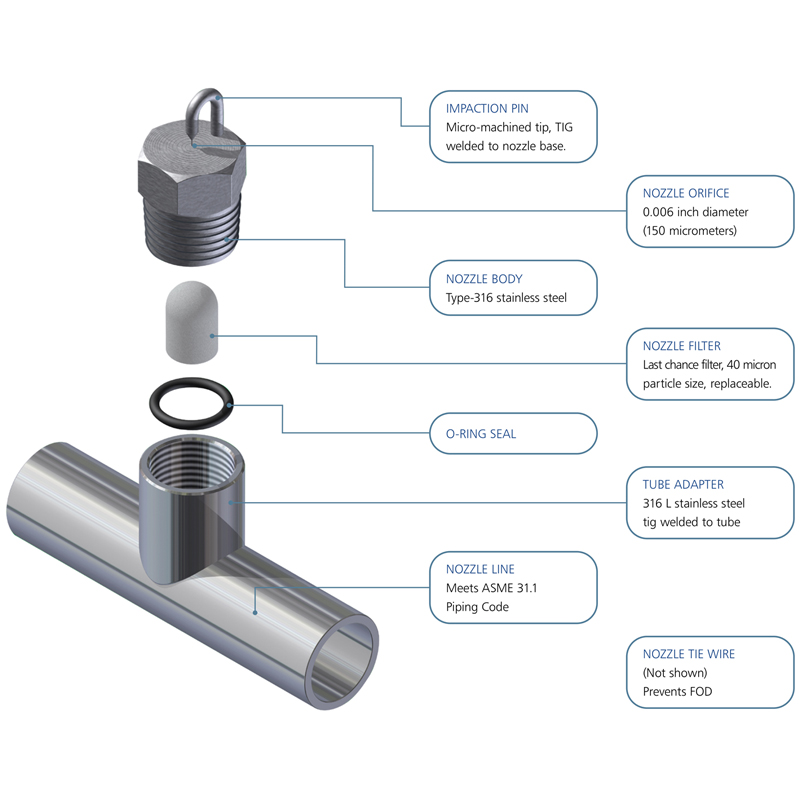

The typical high-pressure fogging system consists of a high-pressure pump, which delivers demineralized water at 1,000 to 2,000 psi to a series of fog nozzles installed inside the air duct. A typical fog nozzle has an orifice diameter of five to seven thousandths of an inch.

Water jets out of the orifice and hits an impaction-pin, which breaks the water stream up into billions of micron-sized droplets. Fog nozzles create as many as five billion super-fine fog droplets per second, with droplets averaging about 5 microns in diameter.

High-pressure fog systems can be controlled by using solenoid valves to turn on stages of fog nozzles or by controlling the speed of the high-pressure pump with a variable frequency drive. The latter method offers the most precise control of humidity but is generally more expensive.

- Uses one horsepower for every 600 lbs. of water/hour

- A one-horsepower fog system replaces a 50 ton chiller

- Lower maintenance costs

The space requirement for the high-pressure pump system is minimal and the first cost is often less than that of both steam and compressed air systems. Maintenance costs are also relatively low, mainly involving replacement of water filters and service to the high-pressure pumps. The biggest advantage of high-pressure cold-water fogging, however, is running costs.

A typical fog system uses one horsepower for every 600 lbs. of water per hour. Since evaporating one pound of water at room temperature removes approximately 1,100 BTUs, each horsepower of pumping removes about 660,000 BTUs per hour. At 12,000 BTUs per hour per ton of cooling, that is about 55 tons. A fogging system with a 10 HP motor, therefore, could replace a 500 ton chiller.
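The arithmetic behind this comparison can be checked with a few lines of Python. The sketch uses the two figures quoted above (600 lbs. of water per hour per horsepower, and roughly 1,100 BTUs removed per pound evaporated) plus the standard definition of one ton of cooling as 12,000 BTUs per hour. The function name is illustrative, and the result assumes every pound of fog fully evaporates.

```python
BTU_PER_HOUR_PER_TON = 12_000.0  # standard definition of one ton of cooling
WATER_LB_PER_HOUR_PER_HP = 600.0  # pump rating quoted in the text
BTU_PER_LB_EVAPORATED = 1_100.0   # approximate figure quoted in the text


def cooling_tons(pump_hp: float) -> float:
    """Evaporative cooling (in tons) delivered per the quoted rule of thumb."""
    btu_per_hour = pump_hp * WATER_LB_PER_HOUR_PER_HP * BTU_PER_LB_EVAPORATED
    return btu_per_hour / BTU_PER_HOUR_PER_TON


if __name__ == "__main__":
    for hp in (1.0, 10.0):
        print(f"{hp:.0f} HP of pumping: about {cooling_tons(hp):.0f} tons of cooling")
```

With those inputs, one horsepower corresponds to 55 tons and 10 HP to 550 tons, which is consistent with the rule of thumb of roughly 50 tons per horsepower.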

Fogging systems can be part of a new data center design or can be installed in existing data centers, often without requiring a shutdown of the air handling equipment. Using a fogging system allows the data center to extend the use of outside air economizers to times when the weather conditions would otherwise require use of chillers.

The MeeFog Fog Nozzle

Depending on the local climate or due to air quality issues, however, not all data centers choose to use economizers. Even so, fogging can still reduce cooling costs through indirect cooling without adding any humidity to the data center air by cooling the air stream of Air Cooled Condensers (ACCs).

A fogging system increases refrigeration capacity during periods of high ambient temperatures by evaporatively cooling the inlet air of the ACC. The capacity increase and electrical demand reduction are significant. To achieve the desired result, the design capacity of the evaporative cooling system must be sufficient to over-cool the air entering the condenser; the objective is to maximize the cooling effect and to wet the condenser tubes. The additional capacity can allow up to 15-20% of existing compressors to be valved off if the facility does not need the increased capacity.

MeeFog Case Study – Fogging Systems for Facebook’s Data Center

Fogging systems have been used for building cooling and humidification for decades. In January 2010, Facebook announced the construction of its first company-owned data center, one that will use evaporative cooling.

“This system evaporates water to cool the incoming air, as opposed to traditional chiller systems that require more energy intensive equipment,” wrote Facebook vice president of technical operations, Jonathan Heiliger, in a blog post announcing the new data center. “This process is highly energy efficient and minimizes water consumption by using outside air.”

The 147,000 square foot facility in Prineville, Oregon is designed to meet the U.S. Green Building Council’s requirements for Leadership in Energy and Environmental Design (LEED) Gold certification and to attain a Power Usage Effectiveness (PUE) rating of 1.15. PUE is calculated by dividing the total power used by the data center by the power used by the computing equipment (servers, workstations, storage, networking, KVM switches, monitors).

A PUE of 1.0 would mean 100% efficiency – no power at all used by UPS modules, Power Distribution Units (PDUs), Chillers, Computer Room Air-conditioning (CRAC) units or lights. A PUE of 1.15 means that for every 115 watts going to the data center, 100 watts goes to the computing equipment and only 15 watts goes to the infrastructure. To put this in perspective, the Uptime Institute estimates a typical data center has a PUE of about 2.5.
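The PUE figures above follow directly from the definition. The Python sketch below shows the calculation with the article's own numbers; the function names are illustrative, and real measurements would normally be averaged over time rather than taken from a single reading.

```python
def pue(total_facility_watts: float, it_equipment_watts: float) -> float:
    """Power Usage Effectiveness: total facility power over IT equipment power."""
    if it_equipment_watts <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_watts / it_equipment_watts


def overhead_watts_per_100_it(pue_value: float) -> float:
    """Infrastructure watts spent for every 100 watts delivered to IT equipment."""
    return (pue_value - 1.0) * 100.0


if __name__ == "__main__":
    target = pue(total_facility_watts=115.0, it_equipment_watts=100.0)
    print(f"Target PUE: {target:.2f}")
    for label, value in (("Target facility", target), ("Typical facility", 2.5)):
        print(f"{label}: about {overhead_watts_per_100_it(value):.0f} W of "
              f"overhead per 100 W of computing load")
```

Seen this way, the typical facility spends about 150 watts on infrastructure for every 100 watts of computing, ten times the overhead of a facility running at a PUE of 1.15.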

“The facility will be cooled by simply bringing in colder air from the outside,” wrote Heiliger. “This feature will operate for between 60 percent and 70 percent of the year. The remainder of the year requires the use of the evaporative cooling system to meet temperature and humidity requirements.”

On July 30, Facebook announced that it would build an additional 160,000 square foot shell at its Prineville facility, outfitting it with servers as needed to meet expansion needs. “We are making excellent progress on the first phase of our Prineville Data Center and we are hoping to finish construction of that phase in the first quarter of 2011,” said Tom Furlong, Director of Site Operations for Facebook.

“To meet the needs of our growing business, we have decided to go ahead with the second phase of the project, which was an option we put in place when we broke ground earlier this year. The second phase should be finished by early 2012.”
