Technologies for Improving Power Usage Effectiveness Ratings

Data Centers

Facebook’s Prineville, Oregon, data center has an entire floor devoted to pulling in outside air, filtering it, cooling and humidifying it, and then sending it down to the floor where the servers are located.

Challenge

To design and build an energy-efficient, 147,000-square-foot data center that can operate year-round without mechanical cooling, even when summer temperatures reach 110°F. Meeting that goal required a cooling design that could hold the servers at the right temperature while keeping energy overhead to a minimum.

Solution

Use a custom-designed MeeFog system, consisting of 28 fogging units and more than 6,600 nozzles, to provide precisely the required levels of cooling and humidification.

Facebook Data Center in Prineville, Oregon

Prineville is in a high-desert region where rainfall averages just 10 inches per year and summer temperatures are typically in the 80s and 90s °F. Rather than using mechanical chillers or in-row cooling units, the 147,000-square-foot facility relies entirely on outside air and a MeeFog cooling and humidification system to keep temperature and humidity within the desired range.
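Dry air is what makes this approach viable. A direct evaporative cooler can only pull air toward its wet-bulb temperature, and in a high-desert climate the gap between dry-bulb and wet-bulb temperature is large. The sketch below uses the common saturation-effectiveness relation to estimate the temperature of air leaving an evaporative stage; the wet-bulb temperatures and the 90% effectiveness are hypothetical illustration values, not Prineville design data.

```python
def evap_outlet_temp_f(dry_bulb_f: float, wet_bulb_f: float, effectiveness: float) -> float:
    """Estimate air temperature leaving a direct evaporative cooling stage.

    Saturation-effectiveness relation: T_out = T_db - effectiveness * (T_db - T_wb).
    With effectiveness <= 1, the air is never cooled below its wet-bulb temperature.
    """
    if not 0.0 <= effectiveness <= 1.0:
        raise ValueError("effectiveness must be between 0 and 1")
    if wet_bulb_f > dry_bulb_f:
        raise ValueError("wet-bulb temperature cannot exceed dry-bulb temperature")
    return dry_bulb_f - effectiveness * (dry_bulb_f - wet_bulb_f)


if __name__ == "__main__":
    # Hypothetical inputs: the same 110 °F air on a dry day and on a more humid day.
    print(round(evap_outlet_temp_f(110.0, 65.0, 0.90), 1))  # 69.5 (dry: large wet-bulb depression)
    print(round(evap_outlet_temp_f(110.0, 80.0, 0.90), 1))  # 83.0 (humid: much less cooling)
```

The comparison shows why the technique favors dry climates: with identical outdoor dry-bulb temperature, the humid case leaves noticeably warmer supply air.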

Setting the Standard for Energy Efficiency

In search of better ways to remove heat from data center components, many companies use designs that bring cooling directly to the rack, or even to individual components within the rack, rather than bringing the whole room to the desired temperature. Facebook took the opposite approach, bringing the entire data center to the desired temperature and completely eliminating fans in the racks and servers.

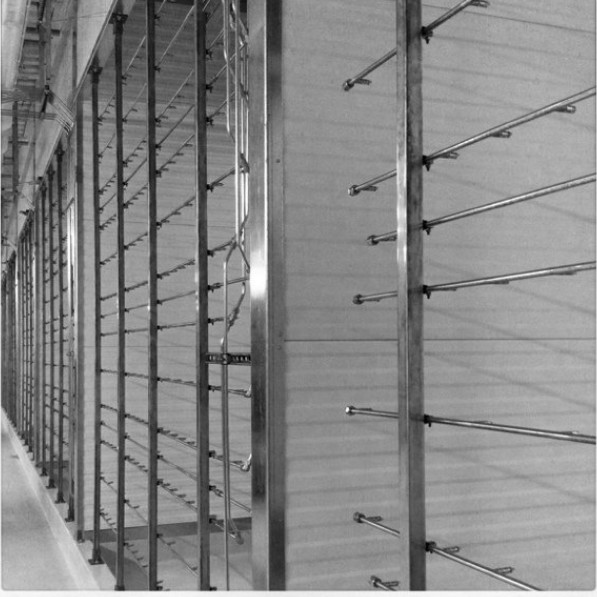

The data center was designed to operate at 80.5°F, in accordance with ASHRAE (American Society of Heating, Refrigerating and Air-Conditioning Engineers) standards for data centers, and to maintain that temperature even when outdoor temperatures hit 110°F, four degrees above the highest temperature recorded in Prineville in the last 50 years. To achieve this, a MeeFog system was designed with 56 positive displacement fog pump units, each driven by a 7.5 hp motor with a variable frequency drive and each delivering 7.62 gpm at 1,000 psi. Two pumps serve each of the data center’s 28 air handling units: one on active duty and the other on standby, with automatic switchover. These pumps send water through stainless steel tubing to an array of impaction-pin nozzles, which convert the water into a fine fog designed to evaporate rapidly, bringing the air down to the desired temperature and providing the required level of humidity.
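The pump figures above can be cross-checked with simple arithmetic. This sketch counts only the duty pumps toward capacity, since standby units add redundancy rather than flow, and treats 7.62 gpm as each pump's rated flow; actual flow under variable frequency drive control will be lower whenever less cooling is needed. The `select_pump` function is a hypothetical illustration of duty/standby switchover, not MeeFog's actual control logic.

```python
# Figures from the case study; "more than 6,600 nozzles" is treated as a lower bound.
PUMP_UNITS = 56          # positive displacement fog pump units
AIR_HANDLERS = 28        # air handling units, each with one duty and one standby pump
PUMP_FLOW_GPM = 7.62     # rated flow per pump at 1,000 psi
PUMP_MOTOR_HP = 7.5      # motor size per pump
MIN_NOZZLES = 6600       # lower bound on total nozzle count


def fog_system_summary() -> dict:
    """Capacity figures counting only the duty (active) pump on each air handler."""
    duty_pumps = AIR_HANDLERS  # one active pump per air handler
    return {
        "pumps_per_ahu": PUMP_UNITS // AIR_HANDLERS,
        "duty_pumps": duty_pumps,
        "rated_duty_flow_gpm": duty_pumps * PUMP_FLOW_GPM,
        "duty_motor_hp": duty_pumps * PUMP_MOTOR_HP,  # installed motor size, not power drawn
        "min_nozzles_per_ahu": MIN_NOZZLES / AIR_HANDLERS,
    }


def select_pump(duty_healthy: bool, standby_healthy: bool) -> str:
    """Hypothetical switchover rule: prefer the duty pump, fall back to standby."""
    if duty_healthy:
        return "duty"
    if standby_healthy:
        return "standby"
    return "none"


if __name__ == "__main__":
    for key, value in fog_system_summary().items():
        print(f"{key}: {value:.1f}" if isinstance(value, float) else f"{key}: {value}")
    print(select_pump(duty_healthy=False, standby_healthy=True))  # standby
```

Running it gives about 213 gpm of rated duty flow across 28 active pumps, 210 hp of installed duty motor capacity, and at least roughly 236 nozzles per air handler.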

The results were remarkable. A standard measure of data center efficiency is the Power Usage Effectiveness (PUE) rating developed by the Green Grid consortium. PUE is calculated by dividing the total power the data center uses by the power used directly by the IT equipment, so a PUE of 1.00 would mean that every single watt goes to the IT equipment. Data centers worldwide tend to average a PUE of around 2.0 to 2.5, while Google achieves a stellar average PUE of 1.13. Facebook designed its data center to achieve a PUE of 1.15, but when it tested the facility in December 2010, it recorded a PUE of 1.06. In other words, for every 100 watts going to the computing equipment, only six watts go to cooling, lighting, UPS and power distribution.
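The PUE definition above reduces to a single division, and the overhead per 100 W of IT load follows directly from it. In this minimal sketch the function names and the kilowatt figures in the usage example are hypothetical; only the PUE values come from the text.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("total facility power cannot be below IT equipment power")
    return total_facility_kw / it_equipment_kw


def overhead_watts_per_100w_it(pue_value: float) -> float:
    """Non-IT power (cooling, lighting, UPS, distribution) per 100 W delivered to IT equipment."""
    return (pue_value - 1.0) * 100.0


if __name__ == "__main__":
    # Hypothetical load: 1,060 kW at the utility meter, 1,000 kW reaching the IT equipment.
    print(round(pue(1060.0, 1000.0), 2))  # 1.06
    for label, value in [("Typical data center", 2.0), ("Google average", 1.13),
                         ("Prineville design target", 1.15), ("Prineville test", 1.06)]:
        print(f"{label}: {overhead_watts_per_100w_it(value):.0f} W overhead per 100 W of IT load")
```

At the 1.06 measured in December 2010 the overhead is 6 W per 100 W of IT load, compared with 100 W at a PUE of 2.0.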

Telling the World

One of Facebook’s defining characteristics is sharing information with the world, and it took the same approach with its data center design. Once the data center was up and running, Facebook announced the Open Compute Project (opencompute.org), which makes publicly available all of the server and data center specifications behind the results achieved in Prineville.

To help kick off the Open Compute Project, Facebook conducted a tour of the Prineville data center, showcasing the technologies it used to achieve such a low PUE. The data center has an entire floor devoted to pulling in outside air, filtering it, cooling and humidifying it, and then sending it down to the floor where the servers are located. There is no ductwork in the data center; instead, the air-handling system uses a wall of high-efficiency 5 hp variable-speed fans to create positive air pressure in a 14-foot plenum above the cold aisles, minimizing the work required at the server to pull air past the components.
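Variable-speed fans pay off because of the fan affinity laws: airflow scales roughly with fan speed, while shaft power scales roughly with the cube of speed. The sketch below applies that idealized cube law to one of the 5 hp fans, assuming (hypothetically) that the fan draws its full 5 hp at 100% speed; real installations deviate because of motor and drive losses and the shape of the system curve.

```python
def fan_power_hp(rated_power_hp: float, speed_fraction: float) -> float:
    """Idealized fan affinity law: power scales with the cube of speed (airflow scales linearly)."""
    if speed_fraction < 0:
        raise ValueError("speed fraction must be non-negative")
    return rated_power_hp * speed_fraction ** 3


if __name__ == "__main__":
    RATED_HP = 5.0  # assumption: the 5 hp fan draws its full rating at 100% speed
    for speed in (1.0, 0.8, 0.6, 0.5):
        print(f"{speed:.0%} speed -> ~{speed:.0%} airflow, {fan_power_hp(RATED_HP, speed):.2f} hp")
```

Under these idealized assumptions, running a fan at 80% speed moves about 80% of its rated airflow for roughly half (51%) of its rated power, which is why slowing many fans down beats running a few at full speed.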

The upper air-handling deck has a series of louvers that control how much outside air is pulled into the building and how much hot server exhaust is vented outside or recirculated back through the servers.
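The louver settings amount to choosing a blend of outside air and recirculated server exhaust. A simplified sensible-heat mixing balance, which ignores humidity differences and fan heat and assumes equal specific heat for both streams, gives the recirculation fraction needed to reach a target supply temperature. The function name and the outdoor and exhaust temperatures below are hypothetical; the 80.5°F target is the design temperature given earlier in this article.

```python
def recirculation_fraction(outside_f: float, exhaust_f: float, target_f: float) -> float:
    """Share of server exhaust to recirculate so the mixed air reaches target_f.

    Solves T_mix = f * T_exhaust + (1 - f) * T_outside for f, on a mass-flow basis.
    If the target lies outside the range spanned by the two streams, mixing alone
    cannot reach it, and clamping to [0, 1] selects whichever stream is closer.
    """
    if exhaust_f == outside_f:
        return 0.0  # both streams are identical; the blend makes no difference
    f = (target_f - outside_f) / (exhaust_f - outside_f)
    return min(1.0, max(0.0, f))


if __name__ == "__main__":
    # Hypothetical winter case: 30 °F outside air tempered with 100 °F exhaust.
    print(round(recirculation_fraction(30.0, 100.0, 80.5), 2))  # 0.72
    # Hypothetical summer case: outside air is already above target, so no exhaust
    # is recirculated and evaporative cooling has to close the gap.
    print(recirculation_fraction(95.0, 100.0, 80.5))  # 0.0
```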

The MeeFog units generally operate in summer to cool the air, but they can also be used in winter to raise humidity to the appropriate level and reduce static electricity.
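Winter humidification is needed because warming cold air sharply lowers its relative humidity: the moisture content stays the same while the air's capacity to hold water vapor rises. The sketch below estimates that drop using the Magnus approximation for saturation vapor pressure over liquid water (6.112 hPa, 17.62, 243.12 °C), which is a simplification below freezing; the 20°F, 80% RH outdoor condition and the 70°F supply temperature are hypothetical.

```python
import math


def saturation_vapor_pressure_hpa(temp_c: float) -> float:
    """Magnus approximation of saturation vapor pressure over liquid water, in hPa."""
    return 6.112 * math.exp(17.62 * temp_c / (243.12 + temp_c))


def f_to_c(temp_f: float) -> float:
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def rh_after_heating(rh_percent: float, start_f: float, end_f: float) -> float:
    """Relative humidity after sensible heating at constant pressure.

    Heating adds no water, so the actual vapor pressure is unchanged and
    RH scales with the ratio of saturation vapor pressures.
    """
    actual_vp = rh_percent / 100.0 * saturation_vapor_pressure_hpa(f_to_c(start_f))
    return 100.0 * actual_vp / saturation_vapor_pressure_hpa(f_to_c(end_f))


if __name__ == "__main__":
    # Hypothetical winter case: 20 °F outdoor air at 80% RH warmed to 70 °F.
    print(f"{rh_after_heating(80.0, 20.0, 70.0):.0f}% RH after warming")
```

Under these assumptions the warmed air ends up near 12% RH, which illustrates why adding moisture in winter matters for static control.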
